Adding FP32 processing definitely doesn't hurt either. I'm totally in favor of returning FP32 for an FP32 input. It hasn't for me (yet).Īlso, please don't misunderstand me. However, in this scenario, the main memory is more of a staging area for the GPU anyway (although pre-processing happens there) and as long as it provides a large enough buffer to feed the GPU it's no problem. Does anyone have a good scenario? For me, the only time I can't fit everything into main memory is in our deep-learning work. I'm actually a little curious if storage is an issue people face. In some sense, moving towards cython means moving away from SIMD at least at the moment. If it does happen, we can indeed get a nice boost from FP32, but making it happen in a predictable way in the current codebase is a PITA. For cython, there is some implicit support whenever the function happens to be written in such a way that the compiler can auto-vectorize it. Where we rely on numpy for speed, it can help however, we opt for cython implementations to speed things up. This turns SIMD into a bit of a painful topic.

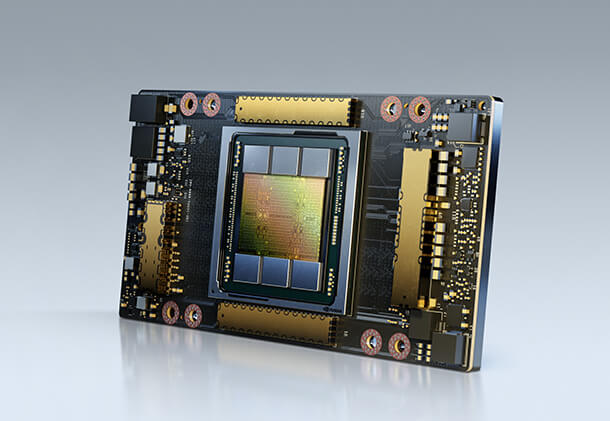

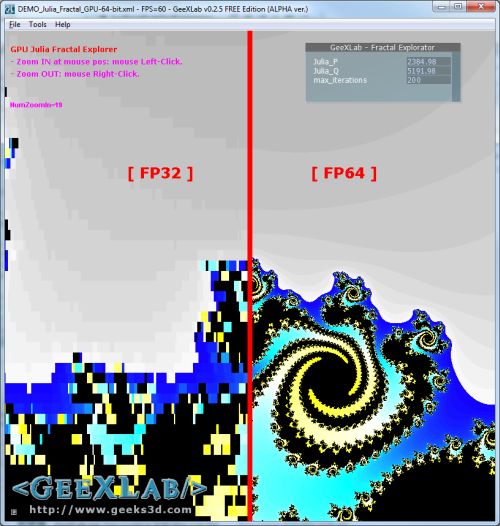

I'm actually a big fan of SIMD, but this is again not something that, to my knowledge, skimage doesn't explicitly aim to support mainly for reasons of maintainability. On the other hand, as far as I am aware, extending to the GPU isn't on the roadmap for skimage, so I'm hesitant to give this argument a lot of I also agree with the SIMD argument, i.e., that one can fit more numbers into the register and get a proportional speedup. Ampere adds FP32 (and FP64 support in their A100 line), but processing happens with "reduced precision" (me thinks bfloat16). In fact, in this line of thinking it is probably much better to add FP16 support or consider the new and shiny bfloat16 since this puts us into the realm of tensor cores. This also makes storing in FP64 format a little undesirable. #3439: cython I totally get the argument in a GPU computing context, as a GPU tends to have many more single-precision FPUs than double-precision FPUs (if any).

#3936: denoise_bilateral, denoise_tv_bregman #5220: trics: structural_similarity previously merged PRs related to single precision support #5219: skimage.restoration: wiener, unsupervised_wiener, rolling_ball #5204: skimage.registration: phase_cross_correlation, masked_phase_cross_correlation #5199: issue regarding adding float32 support to measures functions open issues and PRs related to dtype handling PR #4307 adds float32 support to moments_hu and infers precision from the input for the default dtype=None, but also allows overriding this default. This is what I implemented for phase_cross_correlation and masked_phase_cross_correlation in #5204. I am generally in favor of preserving the floating point precision of the input (although usually promoting float16 to float32). For those two it defaults to dtype=float32. We have some functions such as optical_flow_ilk and optical_flow_tvl1 that use a dtype argument to control the precision. should we generally provide a dtype argument to allow the user to override the default?.When inferring floating point dtype for integer inputs, should we use the result of np.promote_types(image.dtype, np.float32) or always use np.float64? promote_types promotes 8 and 16-bit integers to float32, but larger integers to float64.should we infer floating precision based on input dtype?.There are corner cases such as non-local means using the integral image approach where accuracy considerations require internal use of float64, but in most cases float32 precision is acceptable. I opened this issue to track PRs related to float32 support and discuss best practices to make behavior more uniform across functions. Some functions in scikit-image support single precision and many can potentially do so with minimal modification.
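The three dtype questions in the issue can be made concrete with a minimal sketch of one possible policy. It honors an explicit `dtype` override, keeps float32/float64 inputs as they are, promotes float16 to float32, and uses `np.promote_types(input_dtype, np.float32)` for integer and boolean inputs. `infer_float_dtype` and `centered` are hypothetical names for illustration, not scikit-image API. The sketch takes the `promote_types` side of the second question only to show how that option behaves.

```python
import numpy as np


def infer_float_dtype(input_dtype, dtype=None):
    """Hypothetical helper: choose the floating-point dtype to compute in.

    Policy sketched here (one option among those discussed):
    - an explicit ``dtype`` always wins (user override);
    - float16 is promoted to float32;
    - other floating-point inputs keep their precision;
    - integer/bool inputs use np.promote_types(input_dtype, np.float32),
      i.e. float32 for 8- and 16-bit integers, float64 for wider ones.
    """
    if dtype is not None:
        return np.dtype(dtype)
    input_dtype = np.dtype(input_dtype)
    if input_dtype == np.float16:
        return np.dtype(np.float32)
    if input_dtype.kind == "f":
        return input_dtype
    return np.promote_types(input_dtype, np.float32)


def centered(image, dtype=None):
    """Hypothetical example function using the dtype=None convention:
    subtract the mean, computing in the inferred (or requested) precision."""
    image = np.asarray(image)
    work = image.astype(infer_float_dtype(image.dtype, dtype), copy=False)
    return work - work.mean()


for dt in (np.bool_, np.uint8, np.uint16, np.int32, np.int64,
           np.float16, np.float32, np.float64):
    print(f"{np.dtype(dt).name:>8} -> {infer_float_dtype(dt).name}")
```

Replacing the last line of `infer_float_dtype` with `return np.dtype(np.float64)` gives the "always float64" alternative from the second question. Everything else stays the same, so the two policies are easy to compare.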
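A plain NumPy sketch can illustrate the non-local means corner case. This is not scikit-image's actual implementation. An integral image (summed-area table) accumulates values up to roughly the total image sum, and each box sum is then a difference of four of these large, nearly equal numbers. Any float32 rounding error in the table therefore shows up directly in the result. The image size, window size `k`, and random seed are arbitrary choices for the demonstration.

```python
import numpy as np


def integral_image(img, dtype):
    """Summed-area table: entry (r, c) holds img[:r+1, :c+1].sum()."""
    return img.astype(dtype).cumsum(axis=0).cumsum(axis=1)


def box_sums(ii, k):
    """Sum of every k x k window via the four-corner difference
    S = D - B - C + A on a zero-padded integral image."""
    p = np.pad(ii, ((1, 0), (1, 0)))  # leading zero row and column
    return p[k:, k:] - p[:-k, k:] - p[k:, :-k] + p[:-k, :-k]


rng = np.random.default_rng(0)
img = rng.random((2000, 2000))  # float64 values in [0, 1)
k = 3
h, w = img.shape

# Reference: direct k x k window sums in float64 (no large intermediates).
ref = sum(img[i:i + h - k + 1, j:j + w - k + 1]
          for i in range(k) for j in range(k))

err32 = np.abs(box_sums(integral_image(img, np.float32), k) - ref).max()
err64 = np.abs(box_sums(integral_image(img, np.float64), k) - ref).max()
print(f"max abs error  float32: {err32:.3g}  float64: {err64:.3g}")
```

Each window sum here is only a few units, while the table entries it is computed from approach two million. At that magnitude the float32 spacing is already about 0.125, so the float32 error is a visible fraction of the window sum. The float64 error stays negligible.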
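The SIMD and storage points in the reply, along with the wish to return FP32 for an FP32 input, come down to simple arithmetic and NumPy's casting rules. The sketch assumes a 256-bit register (AVX2-sized) purely as an example width. Whether NumPy or a compiler actually vectorizes a given loop is not guaranteed. The sketch also shows a common way a float32 result silently becomes float64: mixing a float32 array with a float64 array.

```python
import numpy as np

# Lanes per register: an assumed 256-bit (AVX2-sized) register holds
# twice as many float32 values as float64 values.
REGISTER_BITS = 256
for dt in (np.float32, np.float64):
    bits = np.dtype(dt).itemsize * 8
    print(f"{np.dtype(dt).name}: {REGISTER_BITS // bits} lanes per register")

# Storage: the same image in float64 takes twice the memory.
image = np.ones((1024, 1024), dtype=np.float32)
print(image.nbytes, image.astype(np.float64).nbytes)  # 4 MiB vs 8 MiB

# Preserving float32 through a computation.
scaled = image * 0.5                  # Python scalar: result stays float32
weights = np.full(image.shape, 0.5)   # np.full defaults to float64
mixed = image * weights               # float32 array * float64 array -> float64
print(scaled.dtype, mixed.dtype, image.mean().dtype)
```

The last line shows that reductions such as `mean` keep a float32 input's dtype by default. Returning FP32 for FP32 input is mostly a matter of not introducing float64 arrays or explicit float64 casts along the way.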
